Vendor lock-in is an old and well-discussed issue. Some people don’t care about it at all and jump right in. Others avoid it like the plague. And then there are those who allow it, with some very careful considerations.

I have always been on the side of avoiding vendor lock-in at all costs. But lately, with all the SaaS offerings and cloud providers, I feel like the line has become a lot more blurred.

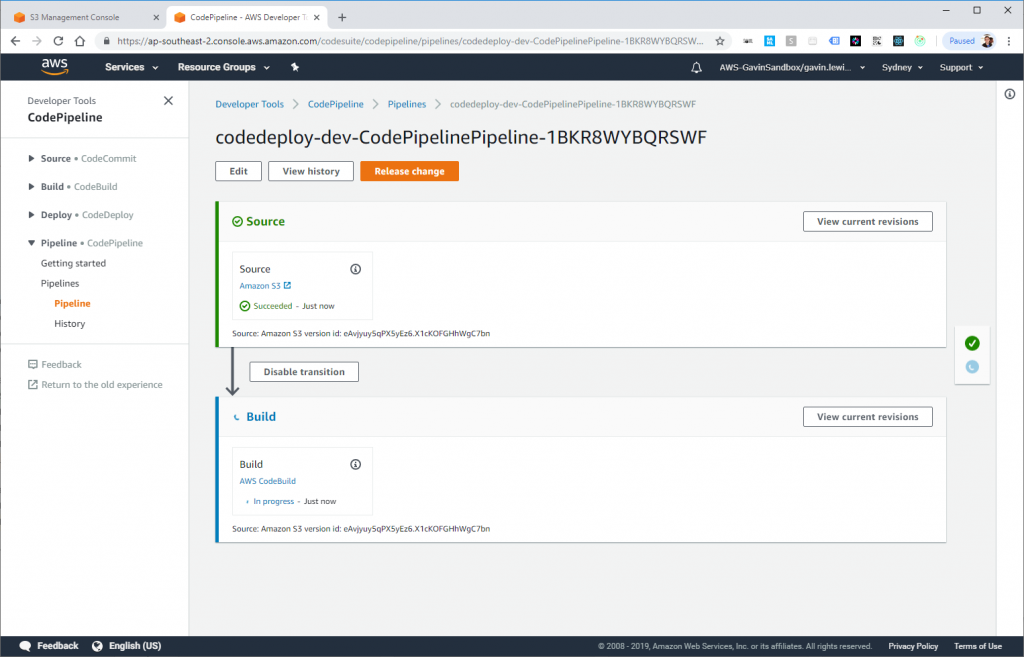

Initially, when I started using Amazon AWS, I approached it exclusively as an IaaS, setting up my own servers in such a way that I would be able to move to another vendor in a heartbeat. These days, I’ve grown to trust Amazon a lot more. But I still feel uneasy about some of the lock-in.

“Cloud Irregular: IAM Is The Real Cloud Lock-In” is an interesting take on cloud lock-in. I found the comparison of Amazon IAM (Identity and Access Management) to Microsoft Active Directory particularly insightful.

To illustrate this point, we have to look no farther than the nine-hundred-pound gorilla of the IAM jungle, which continues to be Microsoft’s ActiveDirectory. I’m not sure I even know what ActiveDirectory is anymore, to be honest. Is it a cloud service? A “hybrid identity” provider? A flippin’ Linux domain controller? The answer to all of those questions appears to be “yes, if that is what you want”, which is why AD implementations will surely keep an army of Microsoft “IT Pros” busy for a couple more decades.

Here’s what ActiveDirectory is not: easy to migrate off of.