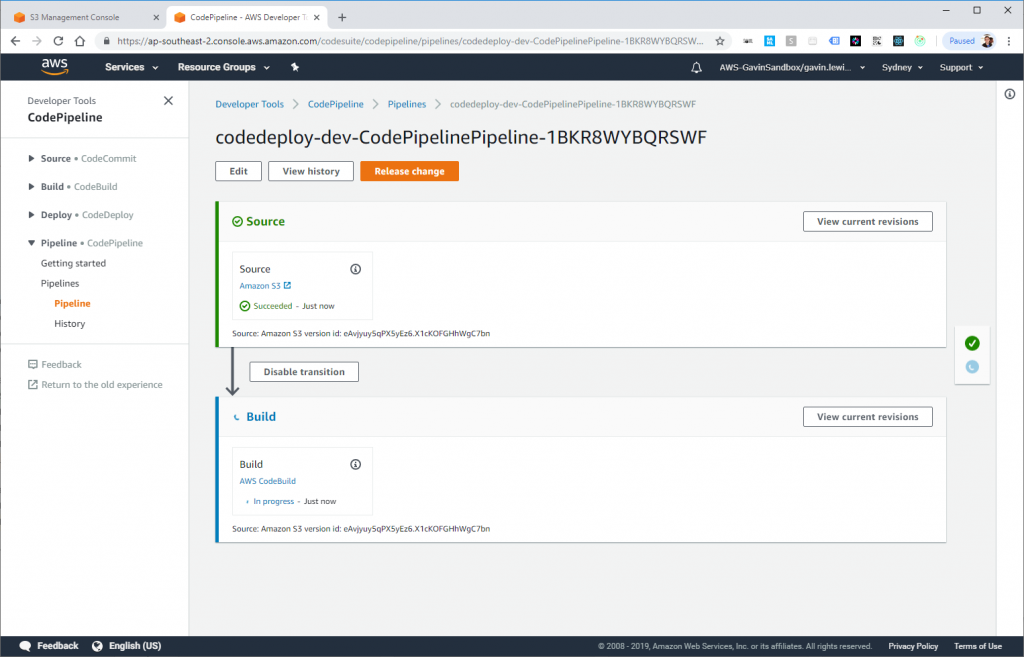

“How To Build a Serverless CI/CD Pipeline On AWS” is a nice guide to some of the newer AWS services, aimed at developers and DevOps engineers. It shows how to tie together the following:

- Amazon EC2 (server instances)

- Docker (containers)

- Amazon ECR (Elastic Container Registry)

- Amazon S3 (storage)

- AWS IAM (Identity and Access Management)

- AWS CodeBuild (Continuous Integration)

- AWS CodePipeline (Continuous Delivery)

- Amazon CloudWatch (monitoring)

- AWS CloudTrail (API activity logs)

The examples in the article set up a CI/CD pipeline for .NET, but they are easily adaptable to other development stacks.