These days I am once again improving my backup routines. After I ran out of all reasonable space on my Dropbox account last year, I moved to homemade rsync scripts and offsite backup downloads between my server and my laptop. Obviously, with my laptop being limited on disk space and not always being online, the situation was less than ideal. And eventually I grew tired of keeping it all running.

A fresh look around at backup software turned up an application that I hadn’t seen before: HashBackup. It’s free, it has the simplest installation ever (statically compiled), it runs on every platform I care about and more, and it supports remote storage via pretty much any protocol. It also features nice backup rotation plans and an interesting way of pushing backups to remote storage with sensible security.

Once I settled on the software, I had to sort out my disk space issue. A full server backup takes about 15 GB and I want to keep a few of them around (daily, weekly, monthly, yearly, etc). And I want to keep them off the server. Not being too enthusiastic about having a home server on all the time, and not having enough space and uptime on my laptop, I decided to check out some of those storage solutions in the cloud. Yeah, I know…

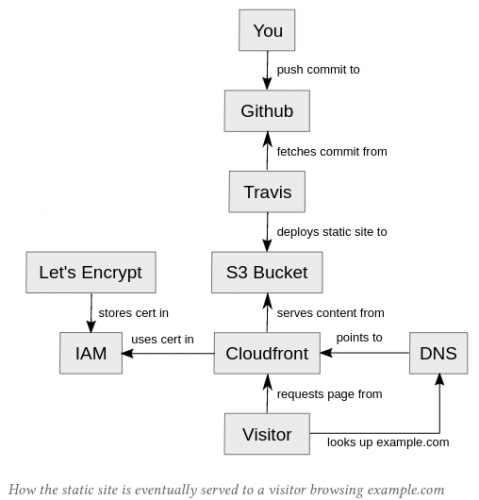

My choice fell upon Amazon S3. Not for any particular reason either. They seem to be cheap, fast, reliable and quite popular. And HashBackup supports them too. So I’ve spent a couple of days (nights, actually) configuring everything to my liking, and now I see the backups are running smoothly without any intervention on my end.

Before I finalize my decision, I want to see the actual Amazon charge. Their prices seem to be well within my budget, but there are many variables that I might be misinterpreting. If they charge what they say they will charge, I might free up much more space across all my computers, I think.
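
To get a rough feel for the storage part of the bill before it arrives, here is a minimal back-of-the-envelope sketch. The 15 GB figure comes from above; the retention counts and the per-GB price are made-up placeholders (not Amazon's actual rates), and request and transfer charges are ignored entirely. It also treats every retained copy as a full 15 GB, so it is likely an upper bound if the backup tool only stores changes.

```python
def estimate_monthly_storage_cost(full_backup_gb: float,
                                  copies_kept: int,
                                  price_per_gb_month: float) -> float:
    """Worst-case monthly storage cost: every kept copy counted at full size."""
    return full_backup_gb * copies_kept * price_per_gb_month


if __name__ == "__main__":
    # Hypothetical retention plan: 7 daily + 4 weekly + 12 monthly + 1 yearly.
    copies = 7 + 4 + 12 + 1

    # Placeholder price per GB per month; look up the current rate
    # for your region and storage class before trusting the result.
    assumed_price = 0.03

    cost = estimate_monthly_storage_cost(15, copies, assumed_price)
    print(f"{copies} copies x 15 GB at {assumed_price}/GB-month: about {cost:.2f} per month")
```

Swap in the real price and your own retention plan, then compare the result with the first invoice to see which variables you misjudged.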

As far as tips go, I have two, if you decide to follow this path:

- When configuring HashBackup, you’ll find that the documentation on the site is awesome. However, it keeps referring to a dest.conf file that you’d use to configure remote destinations. Example files are not part of the online documentation, but you’ll find a few of them (one for each type of remote destination) in the software tarball, in the doc/ folder.

- When configuring Amazon S3, you’ll probably be tempted to use a more restrictive access policy than those offered by Amazon. For instance, you’ll probably want to limit access by folder rather than by bucket. Word of advice: start with one of Amazon’s policies first and make sure everything works. Only then switch to your own custom policy. Otherwise, you might spend too much time troubleshooting the wrong issue.
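
For the second tip, a folder-limited policy might look roughly like the one this sketch generates. The bucket name `my-backup-bucket` and the folder `server1` are hypothetical, and the list of allowed actions is my assumption about what a backup tool typically needs, not something taken from the HashBackup documentation, so treat it as a starting point to test against rather than a known-good policy.

```python
import json


def prefix_policy(bucket: str, prefix: str) -> dict:
    """Build an IAM policy document limited to one 'folder' (key prefix) in a bucket."""
    prefix = prefix.strip("/")
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                # Listing is a bucket-level action, so it is narrowed
                # with a condition on the requested key prefix.
                "Sid": "ListOnlyBackupPrefix",
                "Effect": "Allow",
                "Action": ["s3:ListBucket"],
                "Resource": [f"arn:aws:s3:::{bucket}"],
                "Condition": {
                    "StringLike": {"s3:prefix": [prefix, f"{prefix}/*"]}
                },
            },
            {
                # Object-level actions are narrowed by the resource ARN itself.
                # Assumed action set; your tool may need more or fewer.
                "Sid": "ReadWriteBackupObjects",
                "Effect": "Allow",
                "Action": ["s3:GetObject", "s3:PutObject", "s3:DeleteObject"],
                "Resource": [f"arn:aws:s3:::{bucket}/{prefix}/*"],
            },
        ],
    }


if __name__ == "__main__":
    # Hypothetical bucket and folder names; replace with your own.
    print(json.dumps(prefix_policy("my-backup-bucket", "server1"), indent=2))
```

The printed JSON can be pasted into the policy editor once the backups already work with one of Amazon’s ready-made policies. If something breaks after the switch, you know the custom policy is the culprit.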