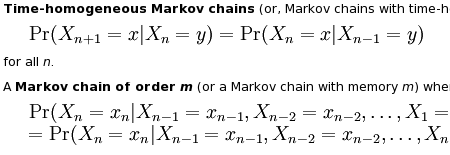

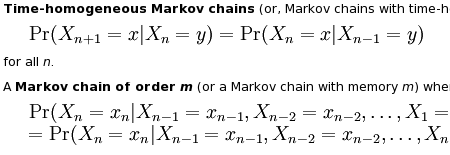

I’ve been hearing about “Markov chains” for long enough – it was time I learned something. Wikipedia seemed like a good starting point. I have to warn you, though: be careful scrolling that page, because you can easily end up looking at something like this:

If you aren’t a rocket scientist or someone who solves integrals for fun, by all means, use the contents menu or jump directly to the Applications section. That’s where all the fun is. Here are some quotes to get you interested (and for me to remember).

Physics:

Markovian systems appear extensively in physics, particularly statistical mechanics, whenever probabilities are used to represent unknown or unmodelled details of the system, if it can be assumed that the dynamics are time-invariant, and that no relevant history need be considered which is not already included in the state description.
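To convince myself what “time-invariant” and “no relevant history” mean in practice, here is a minimal Python sketch of such a chain. The two weather states and their probabilities are made up for illustration. The point is that `next_state` looks only at the current state, and that pushing a starting distribution through the same unchanging table over and over settles down to a fixed mix.

```python
import random

# Hypothetical two-state chain, made up for illustration.
# TRANSITIONS[current][next] = probability of moving from current to next.
# The table never changes over time (time-invariant).
TRANSITIONS = {
    "sunny": {"sunny": 0.9, "rainy": 0.1},
    "rainy": {"sunny": 0.5, "rainy": 0.5},
}


def next_state(current, rng):
    """Sample the next state using only the current state (the Markov property)."""
    targets = list(TRANSITIONS[current])
    weights = [TRANSITIONS[current][t] for t in targets]
    return rng.choices(targets, weights=weights, k=1)[0]


def simulate(start, n_steps, seed=0):
    """Return a random path of n_steps transitions starting from `start`."""
    rng = random.Random(seed)
    path = [start]
    for _ in range(n_steps):
        path.append(next_state(path[-1], rng))
    return path


def propagate(dist, n_steps):
    """Push a probability distribution over states forward n_steps exactly."""
    for _ in range(n_steps):
        new = {s: 0.0 for s in TRANSITIONS}
        for s, p in dist.items():
            for t, q in TRANSITIONS[s].items():
                new[t] += p * q
        dist = new
    return dist


print(simulate("sunny", 10))
print(propagate({"sunny": 1.0, "rainy": 0.0}, 50))
```

Starting from a sunny day, the distribution after 50 steps is essentially 5/6 sunny and 1/6 rainy. That is the chain’s stationary distribution: solving π_sunny = 0.9 π_sunny + 0.5 π_rainy gives π_sunny = 5 π_rainy.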

Testing:

Several theorists have proposed the idea of the Markov chain statistical test, a method of conjoining Markov chains to form a ‘Markov blanket’, arranging these chains in several recursive layers (‘wafering’) and producing more efficient test sets — samples — as a replacement for exhaustive testing.

Information sciences:

Claude Shannon’s famous 1948 paper A Mathematical Theory of Communication, which at a single step created the field of information theory, opens by introducing the concept of entropy through Markov modeling of the English language. Such idealised models can capture many of the statistical regularities of systems. Even without describing the full structure of the system perfectly, such signal models can make possible very effective data compression through entropy coding techniques such as arithmetic coding. They also allow effective state estimation and pattern recognition.
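The entropy part can be shown with a few lines of arithmetic. For a stationary Markov source, the entropy rate (average information per symbol, roughly what an ideal entropy coder can get down to) is H = -Σ_i π_i Σ_j P_ij log2 P_ij, where P is the transition matrix and π its stationary distribution. The sketch below uses a made-up two-state matrix, not anything from Shannon’s paper, and approximates π by applying P repeatedly.

```python
import math

# Hypothetical transition matrix: P[i][j] = probability of going from state i to j.
P = [
    [0.9, 0.1],
    [0.5, 0.5],
]


def stationary(P, iters=1000):
    """Approximate the stationary distribution by repeatedly applying P (power iteration)."""
    n = len(P)
    pi = [1.0 / n] * n
    for _ in range(iters):
        pi = [sum(pi[i] * P[i][j] for i in range(n)) for j in range(n)]
    return pi


def entropy_rate(P):
    """Entropy rate in bits per symbol: H = -sum_i pi_i sum_j P_ij log2 P_ij."""
    pi = stationary(P)
    h = 0.0
    for i, row in enumerate(P):
        for p in row:
            if p > 0:  # 0 * log 0 is taken as 0
                h -= pi[i] * p * math.log2(p)
    return h


print(round(entropy_rate(P), 3))  # prints 0.557
```

A memoryless source with the same symbol frequencies (5/6 and 1/6) would need about 0.65 bits per symbol, so knowing the previous symbol really does buy some compression.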

Internet applications:

The PageRank of a webpage as used by Google is defined by a Markov chain.

and

Markov models have also been used to analyze web navigation behavior of users. A user’s web link transition on a particular website can be modeled using first or second order Markov models and can be used to make predictions regarding future navigation and to personalize the web page for an individual user.
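Both quotes in this section boil down to the same object: a table of probabilities for jumping from one page to another. The sketch below is only an illustration under my own assumptions. `fit_first_order` estimates a first-order model from a few hypothetical click sessions by counting page-to-page transitions, and `pagerank` runs the textbook power iteration with a damping factor of 0.85 on a tiny hypothetical link graph. It is not Google’s production algorithm, just the random-surfer Markov chain PageRank is usually explained with.

```python
from collections import Counter, defaultdict


def fit_first_order(sessions):
    """Estimate P(next page | current page) by counting transitions in click sessions."""
    counts = defaultdict(Counter)
    for session in sessions:
        for cur, nxt in zip(session, session[1:]):
            counts[cur][nxt] += 1
    model = {}
    for cur, followers in counts.items():
        total = sum(followers.values())
        model[cur] = {nxt: c / total for nxt, c in followers.items()}
    return model


def predict_next(model, page):
    """Most likely next page, or None if `page` was never followed by anything."""
    followers = model.get(page)
    if not followers:
        return None
    return max(followers, key=followers.get)


def pagerank(links, damping=0.85, iters=100):
    """Power iteration on the random-surfer chain; `links` maps page -> outgoing links."""
    pages = sorted(set(links) | {t for targets in links.values() for t in targets})
    n = len(pages)
    rank = {p: 1.0 / n for p in pages}
    for _ in range(iters):
        # With probability 1 - damping the surfer jumps to a random page.
        new = {p: (1.0 - damping) / n for p in pages}
        for p in pages:
            targets = links.get(p, [])
            # A page with no outgoing links spreads its rank over every page.
            spread = targets if targets else pages
            share = damping * rank[p] / len(spread)
            for t in spread:
                new[t] += share
        rank = new
    return rank


# Hypothetical data, made up for illustration.
sessions = [
    ["home", "products", "cart"],
    ["home", "products", "reviews"],
    ["home", "products", "cart", "checkout"],
    ["home", "blog"],
]
model = fit_first_order(sessions)
print(predict_next(model, "home"), predict_next(model, "products"))

links = {"a": ["b", "c"], "b": ["c"], "c": ["a"], "d": ["c"]}
print(pagerank(links))
```

Here `home` is followed by `products` in three of four sessions, so that is the prediction; a second-order model would key the counts on the last two pages instead of one. In the link graph, `c` ends up with the highest rank because three pages link to it.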

Statistics:

Markov chain methods have also become very important for generating sequences of random numbers to accurately reflect very complicated desired probability distributions – a process called Markov chain Monte Carlo or MCMC for short. In recent years this has revolutionised the practicability of Bayesian inference methods.
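The core trick of MCMC is to build a Markov chain whose long-run distribution is the one you want samples from. Below is a minimal random-walk Metropolis sampler, one of the simplest MCMC algorithms, picked by me for illustration. The target is a hypothetical Gaussian bump centered at 3, given only up to a constant factor; that is enough, because the constant cancels in the acceptance ratio.

```python
import math
import random


def unnormalized_density(x):
    """Hypothetical target: a Gaussian centered at 3 with sd 1, missing its normalizing constant."""
    return math.exp(-0.5 * (x - 3.0) ** 2)


def metropolis(density, n_samples, step=1.0, x0=0.0, seed=0):
    """Random-walk Metropolis: each sample depends only on the previous one."""
    rng = random.Random(seed)
    x = x0
    samples = []
    for _ in range(n_samples):
        proposal = x + rng.gauss(0.0, step)
        # Accept with probability min(1, p(proposal) / p(x)); the unknown
        # normalizing constant of p cancels in this ratio.
        if rng.random() < density(proposal) / density(x):
            x = proposal
        samples.append(x)  # a rejected proposal repeats the current x
    return samples


samples = metropolis(unnormalized_density, 50_000)
kept = samples[1_000:]  # discard burn-in while the chain walks over from x0 = 0
print(sum(kept) / len(kept))
```

After the burn-in, the sample mean and variance should land close to 3 and 1. Each sample depends only on the previous one, which is exactly what makes this a Markov chain.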

Gambling:

Markov chains can be used to model many games of chance. The children’s games Snakes and Ladders, Candy Land, and “Hi Ho! Cherry-O”, for example, are represented exactly by Markov chains. At each turn, the player starts in a given state (on a given square) and from there has fixed odds of moving to certain other states (squares).
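“Represented exactly” means you can compute things about the game instead of just playing it. Here is a sketch for a made-up 20-square board: the snake and ladder positions are hypothetical, and I assume the rule that a roll overshooting the last square leaves the player in place (house rules vary). The expected number of turns E[s] from square s satisfies E[s] = 1 + (1/6) Σ E[next(s, roll)], with E = 0 at the finish; the code solves that by repeated substitution and cross-checks it with a quick simulation.

```python
import random

FINISH = 20
# Hypothetical board: ladders go up, snakes go down.
JUMPS = {3: 11, 9: 15, 13: 5, 18: 7}


def next_square(square, roll):
    """Where a player on `square` ends up after rolling `roll` on one die."""
    target = square + roll
    if target > FINISH:
        return square  # assumed rule: overshooting the finish means you stay put
    return JUMPS.get(target, target)


def expected_turns(tol=1e-12, max_iters=100_000):
    """Solve E[s] = 1 + mean over rolls of E[next_square(s, roll)], with E[FINISH] = 0."""
    e = [0.0] * (FINISH + 1)
    for _ in range(max_iters):
        new = [0.0] * (FINISH + 1)
        for s in range(FINISH):
            new[s] = 1.0 + sum(e[next_square(s, r)] for r in range(1, 7)) / 6.0
        if max(abs(a - b) for a, b in zip(new, e)) < tol:
            return new
        e = new
    return e


def play(rng):
    """Simulate one game from square 0 and return the number of turns taken."""
    square, turns = 0, 0
    while square != FINISH:
        square = next_square(square, rng.randint(1, 6))
        turns += 1
    return turns


e = expected_turns()
rng = random.Random(42)
games = [play(rng) for _ in range(20_000)]
print(round(e[0], 2), sum(games) / len(games))
```

The simulated average should land close to E[0], a handy sanity check that the equations and the simulation describe the same game. From square 19, for example, only a roll of 1 finishes, so E[19] = 1 + (5/6) E[19], which gives exactly 6 turns.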

Music:

Markov chains are employed in algorithmic music composition, particularly in software programs such as CSound or Max. In a first-order chain, the states of the system become note or pitch values, and a probability vector for each note is constructed, completing a transition probability matrix.
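Here is what “a probability vector for each note, completing a transition probability matrix” can look like in plain Python rather than CSound or Max. The five notes and every probability are made up; each row is one note’s probability vector, and a melody is just a random walk through the matrix.

```python
import random

NOTES = ["C", "D", "E", "F", "G"]

# Hypothetical first-order transition matrix. Row i is the probability vector
# for "what comes after NOTES[i]"; columns follow the order of NOTES.
MATRIX = [
    [0.1, 0.4, 0.3, 0.0, 0.2],  # after C
    [0.3, 0.1, 0.4, 0.2, 0.0],  # after D
    [0.2, 0.2, 0.1, 0.3, 0.2],  # after E
    [0.0, 0.2, 0.4, 0.1, 0.3],  # after F
    [0.5, 0.0, 0.2, 0.2, 0.1],  # after G
]


def validate(matrix):
    """Every row must be a probability vector: non-negative and summing to 1."""
    for note, row in zip(NOTES, matrix):
        if any(p < 0 for p in row) or abs(sum(row) - 1.0) > 1e-9:
            raise ValueError(f"row for {note} is not a probability vector")


def compose(start, length, seed=None):
    """Generate `length` notes, each drawn from the row of the previous note."""
    validate(MATRIX)
    rng = random.Random(seed)
    i = NOTES.index(start)
    melody = [start]
    for _ in range(length - 1):
        i = rng.choices(range(len(NOTES)), weights=MATRIX[i], k=1)[0]
        melody.append(NOTES[i])
    return melody


print(" ".join(compose("C", 16, seed=7)))
```

The zero entries matter as much as the others: with this matrix, C is never followed directly by F. Turning the note names into sound (a synth, MIDI output) is left out here.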

Markov parody generators:

Markov processes can also be used to generate superficially “real-looking” text given a sample document: they are used in a variety of recreational “parody generator” software.
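Parody generators work on the same principle, with words as the states. A minimal sketch, assuming a word-level chain of order 2 (the next word depends on the previous two): `build_chain` records which words follow each word pair in the sample text, keeping duplicates so that frequent continuations get picked more often, and `generate` walks the chain from a random starting pair. The sample text is a few sentences paraphrased from the quotes above.

```python
import random
from collections import defaultdict


def build_chain(text, order=2):
    """Map each tuple of `order` consecutive words to the list of words that follow it."""
    words = text.split()
    chain = defaultdict(list)
    for i in range(len(words) - order):
        chain[tuple(words[i:i + order])].append(words[i + order])
    return chain


def generate(chain, length=30, seed=None):
    """Random walk through the chain; stops early at a state with no successors."""
    rng = random.Random(seed)
    state = rng.choice(list(chain))
    out = list(state)
    order = len(state)
    while len(out) < length:
        followers = chain.get(state)
        if not followers:
            break  # dead end: this word pair only appeared at the end of the text
        out.append(rng.choice(followers))
        state = tuple(out[-order:])
    return " ".join(out)


SAMPLE = (
    "Markov chains can be used to model many games of chance. "
    "Markov chains are employed in algorithmic music composition. "
    "Markov processes can also be used to generate superficially real-looking text "
    "given a sample document, and a Markov chain can be used to generate "
    "real looking text for spam websites."
)
chain = build_chain(SAMPLE)
print(generate(chain, length=25, seed=3))
```

With such a tiny sample the output mostly reshuffles whole phrases; a larger text gives more varied (and less grammatical) results.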

Markov chains for spammers and black hat SEO:

Since a Markov chain can be used to generate real looking text, spam websites without content use Markov-generated text to give the illusion of having content.

This is one of those topics that make me feel sorry for sucking at math so badly. Is there a “Markov Chains for Dummies” book somewhere? I haven’t found one yet, but Google returns quite a few results for the “markov chain” query.