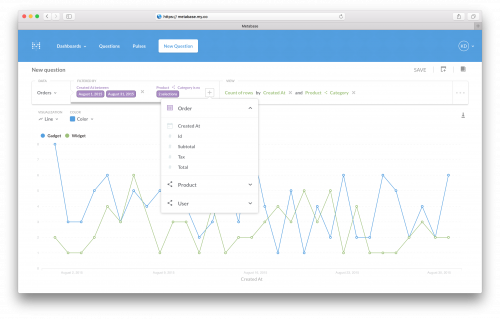

Metabase is an open-source business intelligence and analytics tool. It supports a variety of databases and services as data sources, and provides a number of tools for querying and processing data. Have a look at its GitHub repository as well.

And if you want a few alternatives or complementary tools, I found this list quite useful.