If you are playing around with artificial intelligence and machine learning, have a look at this list of “Top 20 APIs You Should Know In AI and Machine Learning”. These are nicely organized by subject as well.

There is a tonne of information both online and offline on the subjects of Artificial Intelligence and Machine Learning. Some resources provide just the bare minimum introductory information. Others dive deep into specifics. But I haven’t seen one that is as easy to follow as Jason Mayes’ Machine Learning 101 slide deck.

It’s so easy, in fact, that it is probably useful even to non-technical people who want to know how this whole AI thing works. I particularly liked how the slides are separated into green backgrounds for general knowledge and blue backgrounds for in-depth knowledge, which makes the in-depth ones easy to skip. I also find the balance between the details in the slides and the further reading / watching resources to be just right.

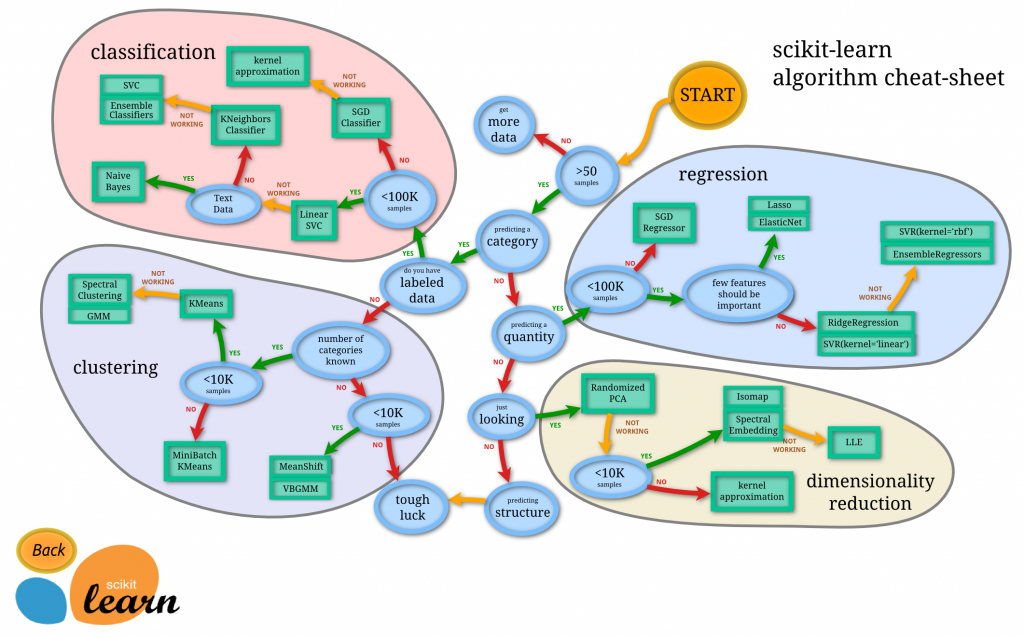

Here’s a good collection of cheatsheets for anyone involved with Big Data and machine learning. Whether you are already well versed in the subject, or just starting, I’m sure you’ll find something useful.

And while we are on the subject of machine learning, check out this repository of Python examples, with explanations of the theory and math behind many of the algorithms involved.

Here’s a super fun list of things that artificial intelligence figured out by gaming the rules: exploiting inconsistent and incomplete specifications, bugs, and other bits that humans frequently assume and ignore.

Some examples to get you started are:

This is truly thinking outside the box!

The Amazon Machine Learning Blog announces some very significant improvements to Amazon Rekognition, their service for image and video analysis. These particular changes improve its face detection and recognition technology.

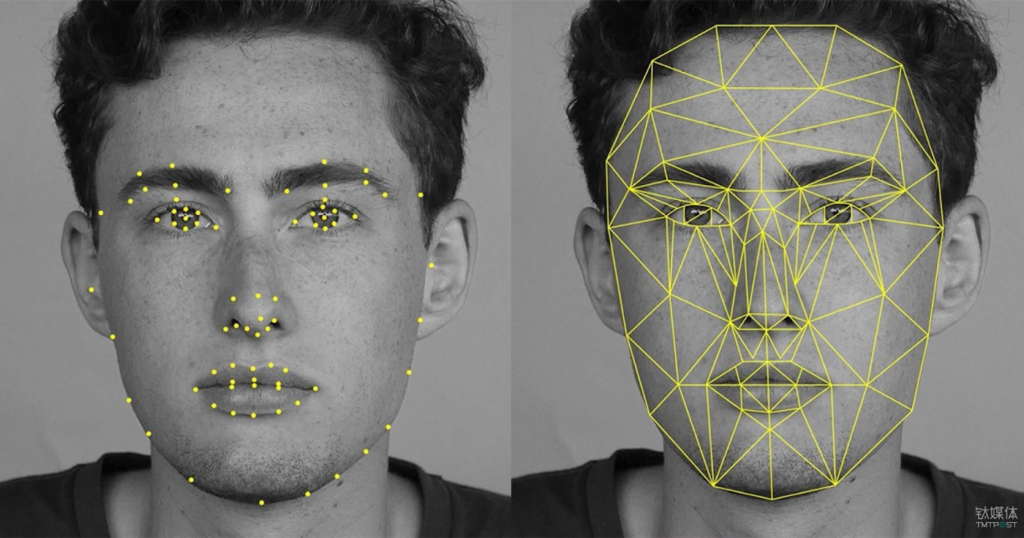

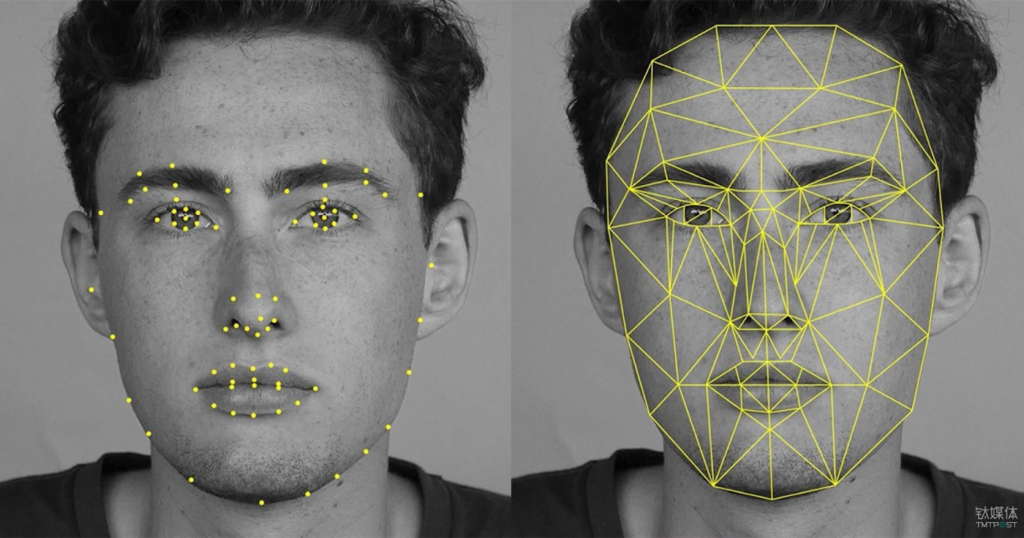

“Face detection” tries to answer the question: Is there a face in this picture? In real-world images, various aspects can have an impact on a system’s ability to detect faces with high accuracy. These aspects might include pose variations caused by head movement and/or camera movements, occlusion due to foreground or background objects (such as faces covered by hats, hair, or hands of another person in the foreground), illumination variations (such as low contrast and shadows), bright lighting that leads to washed out faces, low quality and resolution that leads to noisy and blurry faces, and distortion from cameras and lenses themselves. These issues manifest as missed detections (a face not detected) or false detections (an image region detected as a face even when there is no face). For example, on social media different poses, camera filters, lighting, and occlusions (such as a photo bomb) are common. For financial services customers, verification of customer identity as a part of multi-factor authentication and fraud prevention workflows involves matching a high resolution selfie (a face image) with a lower resolution, small, and often blurry image of face on a photo identity document (such as a passport or driving license). Also, many customers have to detect and recognize faces of low contrast from images where the camera is pointing at a bright light.

With the latest updates, Amazon Rekognition can now detect 40 percent more faces – that would have been previously missed – in images that have some of the most challenging conditions described earlier. At the same time, the rate of false detections is reduced by 50 percent. This means that customers such as social media apps can get consistent and reliable detections (fewer misses, fewer false detections) with higher confidence, allowing them to deliver better customer experiences in use cases like automated profile photo review. In addition, face recognition now returns 30 percent more correct ‘best’ matches (the most similar face) compared to our previous model when searching against a large collection of faces. This enables customers to obtain better search results in applications like fraud prevention. Face matches now also have more consistent similarity scores across varying lighting, pose, and appearance, allowing customers to use higher confidence thresholds, avoid false matches, and reduce human review in applications such as identity verification. As always, for use cases involving civil liberties or customer sentiments, where the veracity of the match is critical, we recommend that customers use best practices, higher confidence level (at least 99%), and always include human review.
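To make the threshold advice above a bit more concrete, here is a minimal Python sketch of how an application might shortlist face matches before handing them to a human reviewer. It does not call the service at all. The function name `shortlist_for_review`, the sample data, and the dictionary shape (a list of matches, each with a `Similarity` score and a `Face` entry) are my own assumptions for illustration, loosely modelled on how I understand face search results to be returned. Only the 99 percent figure comes from the quote.

```python
from typing import Dict, List

# Threshold taken from the quoted recommendation ("at least 99%").
MIN_SIMILARITY = 99.0


def shortlist_for_review(
    face_matches: List[Dict], min_similarity: float = MIN_SIMILARITY
) -> List[Dict]:
    """Keep matches at or above the threshold, best match first.

    The result is a shortlist for a human reviewer, not a final decision.
    """
    kept = [m for m in face_matches if m["Similarity"] >= min_similarity]
    return sorted(kept, key=lambda m: m["Similarity"], reverse=True)


# Made-up sample data in an assumed shape; not real service output.
sample_matches = [
    {"Similarity": 97.2, "Face": {"FaceId": "face-b"}},
    {"Similarity": 99.6, "Face": {"FaceId": "face-a"}},
    {"Similarity": 99.1, "Face": {"FaceId": "face-d"}},
    {"Similarity": 88.0, "Face": {"FaceId": "face-c"}},
]

shortlist = shortlist_for_review(sample_matches)
shortlist_ids = [m["Face"]["FaceId"] for m in shortlist]
print(shortlist_ids)
```

With this sample data only `face-a` and `face-d` survive the cut, in that order. Lowering `min_similarity` lets more candidates through, at the cost of more false matches for the reviewer to weed out.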

Have a look at their blog post for some examples of what the machine can recognize as a face. Some of these are difficult enough to trick many humans, I think.